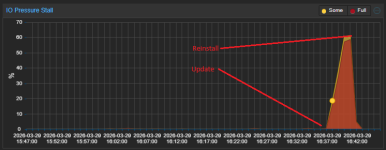

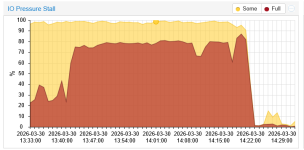

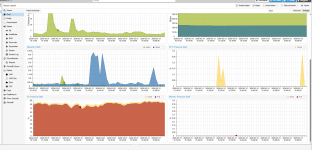

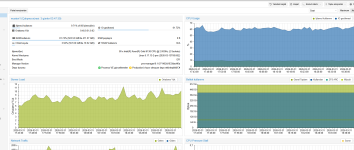

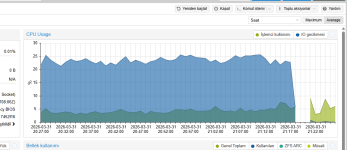

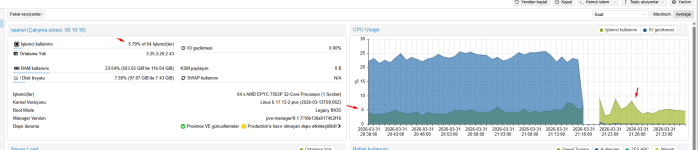

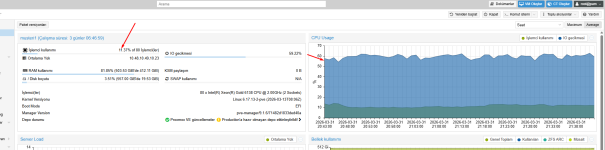

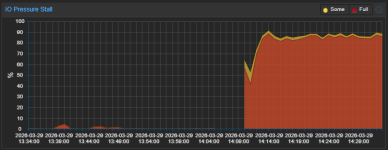

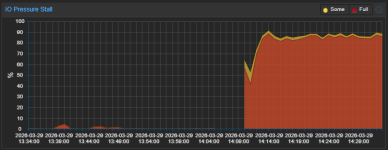

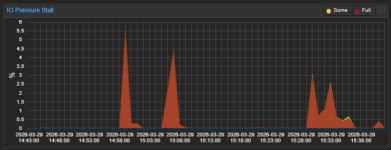

Applying updates from the test repository may have caused severe I/O delay and I/O pressure stalls.

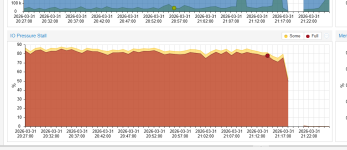

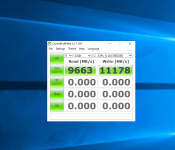

The I/O pressure stall value has reached nearly 100, but `zpool iostat 1` shows no corresponding load.
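To cross-check the graph against the kernel directly, the pressure numbers can be read from the Linux PSI interface at `/proc/pressure/io`. The following is only a minimal sketch: it assumes the PVE graph is derived from this PSI data, and the function names `parse_psi` and `read_io_pressure` are made up for illustration.

```python
from pathlib import Path


def parse_psi(text):
    """Parse PSI text into a dict like {"some": {"avg10": 1.5, ...}, "full": {...}}."""
    result = {}
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue  # skip empty lines
        kind, fields = parts[0], parts[1:]
        # Each field looks like "avg10=0.00" or "total=12345" (total is in microseconds).
        result[kind] = {key: float(value) for key, value in (f.split("=", 1) for f in fields)}
    return result


def read_io_pressure(path="/proc/pressure/io"):
    """Read and parse the I/O pressure file (the path is the standard PSI location)."""
    return parse_psi(Path(path).read_text())
```

Calling `read_io_pressure()` on the host while the graph shows a high value and comparing the `some` and `full` averages with `zpool iostat 1` should show whether the stall time is really being reported by the kernel, even when the pool itself looks idle.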

After reinstalling PVE from `proxmox-ve_9.1-1.iso`, the value drops to between 0 and 1 (at most around 5), but the problem recurs as soon as the test repository is applied.

After reinstalling PVE from `proxmox-ve_9.0-1.iso` and applying the no-subscription repository, the issue does not occur.

I haven’t pinpointed the cause yet, since other tasks leave me no time to reapply the test repository updates right now, so I’ve decided to stay on the no-subscription repository.

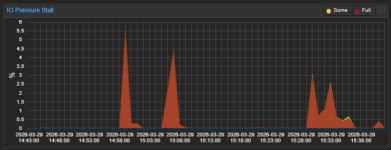

So far, after installing from `proxmox-ve_9.0-1.iso` and applying the following updates, the issue has not recurred.

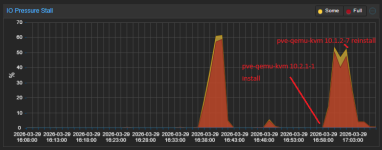

Code:

proxmox-ve: 9.1.0 (running kernel: 6.17.13-2-pve)

pve-manager: 9.1.6 (running version: 9.1.6/71482d1833ded40a)

proxmox-kernel-helper: 9.0.4

proxmox-kernel-6.17: 6.17.13-2

proxmox-kernel-6.17.13-2-pve-signed: 6.17.13-2

proxmox-kernel-6.14: 6.14.11-6

proxmox-kernel-6.14.11-6-pve-signed: 6.14.11-6

proxmox-kernel-6.14.8-2-pve-signed: 6.14.8-2

ceph-fuse: 19.2.3-pve1

corosync: 3.1.10-pve1

criu: 4.1.1-1

frr-pythontools: 10.4.1-1+pve1

ifupdown2: 3.3.0-1+pmx12

intel-microcode: 3.20251111.1~deb13u1

ksm-control-daemon: 1.5-1

libjs-extjs: 7.0.0-5

libproxmox-acme-perl: 1.7.0

libproxmox-backup-qemu0: 2.0.2

libproxmox-rs-perl: 0.4.1

libpve-access-control: 9.0.5

libpve-apiclient-perl: 3.4.2

libpve-cluster-api-perl: 9.1.1

libpve-cluster-perl: 9.1.1

libpve-common-perl: 9.1.8

libpve-guest-common-perl: 6.0.2

libpve-http-server-perl: 6.0.5

libpve-network-perl: 1.2.5

libpve-rs-perl: 0.11.4

libpve-storage-perl: 9.1.1

libspice-server1: 0.15.2-1+b1

lvm2: 2.03.31-2+pmx1

lxc-pve: 6.0.5-4

lxcfs: 6.0.4-pve1

novnc-pve: 1.6.0-3

openvswitch-switch: 3.5.0-1+b1

proxmox-backup-client: 4.1.5-1

proxmox-backup-file-restore: 4.1.5-1

proxmox-backup-restore-image: 1.0.0

proxmox-firewall: 1.2.1

proxmox-kernel-helper: 9.0.4

proxmox-mail-forward: 1.0.2

proxmox-mini-journalreader: 1.6

proxmox-offline-mirror-helper: 0.7.3

proxmox-widget-toolkit: 5.1.8

pve-cluster: 9.1.1

pve-container: 6.1.2

pve-docs: 9.1.2

pve-edk2-firmware: 4.2025.05-2

pve-esxi-import-tools: 1.0.1

pve-firewall: 6.0.4

pve-firmware: 3.18-1

pve-ha-manager: 5.1.1

pve-i18n: 3.6.6

pve-qemu-kvm: 10.1.2-7

pve-xtermjs: 5.5.0-3

qemu-server: 9.1.4

smartmontools: 7.4-pve1

spiceterm: 3.4.1

swtpm: 0.8.0+pve3

vncterm: 1.9.1

zfsutils-linux: 2.4.1-pve1
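To narrow down which package introduces the stalls, a listing like the one above (it looks like `pveversion -v` output) could be saved from both a working install and an affected one, then compared. This is a rough sketch, not a tested diagnostic tool; `parse_versions` and `diff_versions` are hypothetical helper names.

```python
def parse_versions(text):
    """Turn 'name: version' lines into a {name: version} dict."""
    versions = {}
    for line in text.splitlines():
        # Split on the first colon only, so extras such as
        # "(running kernel: ...)" stay part of the version string.
        name, sep, version = line.partition(":")
        if sep and name.strip():
            versions[name.strip()] = version.strip()
    return versions


def diff_versions(good_text, bad_text):
    """Return {name: (good_version, bad_version)} for packages that differ.

    A version of None means the package is missing from that listing.
    """
    good = parse_versions(good_text)
    bad = parse_versions(bad_text)
    return {
        name: (good.get(name), bad.get(name))
        for name in sorted(good.keys() | bad.keys())
        if good.get(name) != bad.get(name)
    }
```

Feeding it the listing from the no-subscription install as `good_text` and one taken after enabling the test repository as `bad_text` would leave only the packages whose versions changed, which is a much shorter list of suspects.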
