Hi,

We have a 3-node cluster running Proxmox with Ceph. The specifications of each node are as follows:

Memory (RAM): 384GB

vCPU: 80 (Total)

NIC: 2 x 25G (dedicated to Ceph traffic only); however, the DAC cables are 10G

Other NICs: used for the management network, cluster, etc.

OSD: 4 x Micron 7450 MAX drives, each used as an OSD.

We tested each drive with the following command and got around 55K to 60K IOPS at a 4k block size.

fio --ioengine=libaio --filename=/dev/sdb --direct=1 --sync=1 --rw=write --bs=4k --numjobs=1 --iodepth=1 --runtime=60 --time_based --name=fio

We ran fio simultaneously on 3 separate disks and got the result above.
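For context, the fio run above uses a single job at queue depth 1, so the IOPS figure translates directly into a per-write latency. Below is a rough sketch of that conversion using Little's law (latency = outstanding I/Os / IOPS). The function name `per_op_latency_us` is made up for illustration, and the numbers are just the 55K to 60K range quoted above.

```python
def per_op_latency_us(iops: float, queue_depth: int = 1) -> float:
    """Mean latency per I/O in microseconds (Little's law: latency = queue_depth / IOPS)."""
    return queue_depth / iops * 1_000_000


# fio was run with --numjobs=1 --iodepth=1, so one write is in flight at a time.
for iops in (55_000, 60_000):
    print(f"{iops} IOPS at QD1 -> {per_op_latency_us(iops):.1f} us per write")
```

So the raw drives complete a synchronous 4k write in well under 20 microseconds when nothing else is in the path.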

We then configured the Ceph network on the 2 x 25G ports as a routed full mesh with fallback, following https://pve.proxmox.com/wiki/Full_Mesh_Network_for_Ceph_Server

As mentioned earlier, these NIC ports are connected with 10G DAC cables, so effectively we get about 20 Gbit/s in an iperf test.

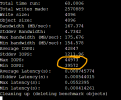

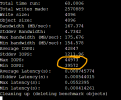

After setting up Ceph with 4 NVMe drives on each of the 3 nodes, we ran a rados benchmark and got the following result, which is shocking:

rados bench -t 32 -b 4096 -p NVMepool1 60 write

We are getting around 45K IOPS. However, we have 12 NVMe drives in total. If each delivers 55K IOPS, the total is 55K x 12 = 660K. Should we not get 20% to 30% of that total, which is roughly 130K to 200K IOPS?

Why are we getting such low IOPS?
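To make the expectation explicit, here is the arithmetic as a small sketch. It only restates the numbers from this post; the 20% to 30% range is our own rule of thumb, not a figure from Ceph documentation, and the variable names are just for illustration.

```python
PER_DRIVE_IOPS = 55_000   # lower end of the fio result per drive
DRIVES = 12               # 4 drives x 3 nodes
MEASURED_IOPS = 45_000    # rados bench result

total = PER_DRIVE_IOPS * DRIVES   # naive sum of raw drive IOPS
low = total * 20 // 100           # 20% of the naive total (our assumption)
high = total * 30 // 100          # 30% of the naive total (our assumption)

print(f"naive total: {total}")
print(f"expected range: {low} to {high}")
print(f"measured share of naive total: {MEASURED_IOPS / total:.1%}")
```

In other words, the measured 45K is under 7% of the naive total, well below the range we expected.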

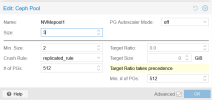

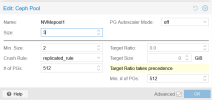
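Two rough back-of-the-envelope numbers may help whoever looks at this. The sketch below assumes Little's law holds for the benchmark (mean latency = operations in flight / IOPS) and counts only the client payload, ignoring replication traffic and protocol overhead. The inputs are the `-t 32` and `-b 4096` values from the rados bench command and the roughly 45K IOPS we measured.

```python
CONCURRENT_OPS = 32      # rados bench -t 32
OBJECT_BYTES = 4096      # rados bench -b 4096
MEASURED_IOPS = 45_000   # approximate result from the benchmark

# Little's law: mean time each write spends in flight.
mean_latency_ms = CONCURRENT_OPS / MEASURED_IOPS * 1000

# Client payload only; replication and protocol overhead are NOT included.
client_gbit_per_s = MEASURED_IOPS * OBJECT_BYTES * 8 / 1e9

print(f"mean write latency: {mean_latency_ms:.2f} ms")
print(f"client payload: {client_gbit_per_s:.2f} Gbit/s")
```

If this estimate is right, each 4k write takes about 0.7 ms end to end, and the payload is around 1.5 Gbit/s, far below the 20 Gbit/s we see in iperf. So the result looks more like a per-operation latency limit than a bandwidth limit, though we have not confirmed that.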

One more detail about the pool: I tried changing the pool size from 3 to 2, but did not see any significant change.
