Hi all,

I am currently trying to set up a CoreOS cluster using VMs inside Proxmox. However, I have hit a few hiccups and have some doubts; perhaps someone more experienced with Proxmox can help.

I used Proxmox to create 6 VMs on an 8-core dedicated server from online.net. The server has Proxmox VE 3.3 as the host operating system. My idea was to use VMs to simulate the creation of machines (similar to how you set up a CoreOS cluster on DigitalOcean), so I used the bare-metal iPXE instructions to run CoreOS VMs inside Proxmox. The problem comes when I have to access those VMs: since noVNC has no way to start a session with an SSH key (AFAIK), I used the host shell to try to SSH into the VMs. However, there are a few things that are confusing me:

First, every VM has the same IPv4 address, 10.0.2.15. My bridged network settings did not work and I could not get vmbr0 to become active, so I started every VM with NAT. How can I give each VM its own IPv4 address?

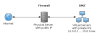
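For context: as far as I can tell, 10.0.2.15 is the default guest address that QEMU's user-mode (NAT) networking hands out, and each VM sits on its own isolated copy of that emulated network, which would explain the identical addresses. What I am after instead is one shared private subnet with a distinct address per VM. A minimal sketch of that addressing plan follows; the 10.10.10.0/24 subnet, the `coreos-N` names, and the `plan_vm_addresses` helper are placeholders chosen purely for illustration, not anything taken from my actual setup.

```python
import ipaddress


def plan_vm_addresses(subnet, vm_count):
    """Assign one distinct address per VM on a shared private subnet.

    The first usable address is reserved for the host-side bridge
    (assumption: the Proxmox host acts as the gateway for the VMs).
    """
    hosts = iter(ipaddress.ip_network(subnet).hosts())
    plan = {"gateway": str(next(hosts))}
    for index in range(1, vm_count + 1):
        # Hypothetical VM names; each one takes the next free address.
        plan["coreos-{}".format(index)] = str(next(hosts))
    return plan


if __name__ == "__main__":
    for name, address in plan_vm_addresses("10.10.10.0/24", 6).items():
        print("{}: {}".format(name, address))
```

Each address would then have to be configured statically inside the VM (or served by DHCP on the bridge), which is the part I have not figured out.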

Second, how can I access the VMs from outside the Proxmox host? I typically use mRemoteNG or PuTTY to log in to servers as root from a Windows laptop. Is there any way to set this up? In theory there would be an SSH tunnel from mRemoteNG <--> Proxmox <--> CoreOS VM, but I don't know how to set this up. Any ideas on how to achieve this would be great.
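To make the tunnel idea concrete, here is a rough sketch of the hop I have in mind, written as a small helper that builds an OpenSSH command using `ProxyCommand` with `ssh -W`. The hostname `proxmox.example.com`, the VM address `10.10.10.2`, and the helper name are hypothetical, and it assumes the VM has an address the Proxmox host can actually reach (which is not the case with my current NAT setup). `core` is the default CoreOS login user.

```python
import shlex


def build_tunnel_command(proxmox_host, vm_address, host_user="root", vm_user="core"):
    """Build an ssh command that reaches a VM by hopping through the Proxmox host."""
    # "ssh -W %h:%p" asks the Proxmox host to relay the connection to the
    # VM's SSH port; %h and %p are expanded by the outer ssh client.
    proxy = "ssh -W %h:%p {}@{}".format(host_user, proxmox_host)
    return [
        "ssh",
        "-o", "ProxyCommand={}".format(proxy),
        "{}@{}".format(vm_user, vm_address),
    ]


if __name__ == "__main__":
    command = build_tunnel_command("proxmox.example.com", "10.10.10.2")
    # Print a copy-pasteable, shell-quoted version of the command.
    print(" ".join(shlex.quote(part) for part in command))
```

I assume PuTTY and mRemoteNG have some equivalent proxy setting, but I have not found how to configure it.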

That last question brings me to the bigger topic of how to expose services from those VMs to the outside world, not just to the host machine. For instance, I want to expose an nginx web server running inside a Docker container on my CoreOS VM.
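My guess is that this comes down to port forwarding on the Proxmox host, something like an iptables DNAT rule that sends traffic arriving on the host's public interface to the VM. A minimal sketch of the rule I imagine, built as an argument list: the interface name `eth0`, the VM address `10.10.10.2`, and the helper name are assumptions for illustration, and the container would still need to publish its port on the VM (for example with `docker run -p 80:80`).

```python
def build_dnat_rule(public_interface, public_port, vm_address, vm_port, protocol="tcp"):
    """Return iptables arguments that forward a host port to a port on a VM."""
    return [
        "iptables", "-t", "nat", "-A", "PREROUTING",
        "-i", public_interface,                  # traffic arriving on the public NIC
        "-p", protocol, "--dport", str(public_port),
        "-j", "DNAT",
        "--to-destination", "{}:{}".format(vm_address, vm_port),
    ]


if __name__ == "__main__":
    # Forward HTTP on the host to nginx on a hypothetical VM address.
    print(" ".join(build_dnat_rule("eth0", 80, "10.10.10.2", 80)))
```

IP forwarding would also have to be enabled on the host for the forwarded packets to be routed.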

The main issue right now is that, because I am using iPXE to boot the machines and I can't get into the VMs over SSH, I can't install CoreOS to disk or run Docker containers on them. Any tips in the right direction on how to solve part or all of my current issues would be great!
